Start a blog – Create your own site

So, you are interested in starting a blog. Read on to learn, step by step, how to start a blog, along with the dos and don’ts that will save you time, money and heartache. You do not need to be a web expert. In fact, even if you are a complete novice, this guide will take you through the steps and hopefully answer some of your questions along the way.

What is a blog?

A blog is really just a website that is set up and run by an individual or a small group of people. It is regularly updated with new material, usually written but sometimes including pictures or videos (a video blog is often called a vlog), in an informal or conversational style. In this article, I use blog, site, and website interchangeably.

Why start a blog?

Starting a blog can be very fulfilling. There are as many reasons why people want to start a blog as there are blogs. A few of the major reasons are:

- To express themselves as a writer or artist;

- To build a community around something they are passionate about; or

- To earn extra money.

A blog is an inexpensive way to achieve those things.

Is blogging on someone else’s website (for free) a good option?

When you start blogging, you can choose to set up your own website or to blog on someone else’s site for free. There are a number of sites you can blog on for free. The best of them include:

- Blogger (blogspot.com)

- WordPress.com (not to be confused with WordPress.org, a content management system for your own self-hosted blog)

Advantages of free blogging sites

The main advantages of these sites are that they:

- are free;

- are easy to set up (no coding required);

- are fast to get started with; and

- offer decent website designs.

Disadvantages of free blogging sites

The main disadvantages of free blogging sites are:

- you don’t own the site, so if the site owner changes something, you are stuck with it.

- the owner can delete your blog (it does happen), and you just have to accept it.

- the site’s domain name will be part of your blog’s address, like this:

- your-blog-name.blogspot.com

- your-blog-name.wordpress.com

- functionality and customization options are limited (you can only use a few add-ons, and many of them are not free).

- the site owner can place advertisements on your blog.

- your blog can look less professional.

Starting your own website

Most people who continue blogging for a longer period of time opt to start their own website. With your own website, you have control over it and can do much more than you can with a free blog. If you are planning to blog long term and can afford the expense (the cost is low but not zero), you will most likely choose this option.

The key steps to starting a blog are:

- Deciding on your topic;

- Selecting and getting a domain name;

- Selecting and getting web hosting;

- Selecting and installing a website content management system (CMS);

- Selecting and installing a website theme;

- Customizing and organizing your site;

- Creating great content; and

- Getting website visitors.

What is your site about?

The best websites have a purpose, so before you start, think carefully about what the purpose of your website will be. If you already have a business or something you want to promote, the purpose may simply be to promote it. Sometimes, however, the purpose is less clear: you may just want to “get online” or “make money online”. If you just want to get online, think about why. If you want to make money online, think about how you intend to make money through your website. There are many ways to make money online, but you should have a plan before you start your blog. Monetizing your site can earn you some extra cash to keep it going, and if it takes off, it could even turn into a full-time income.

Woodworking niche example – reviewing cabinet table saws

You will probably want to choose a niche. For example, if you choose “woodworking”, you could write about different things to make, and review and recommend woodworking tools. It helps if the products associated with the niche cost a significant amount of money, so that you can earn worthwhile affiliate commissions, but not so much that no one will buy them online. People usually want to look at and try something like a house or a car before buying it. However, woodworking tools such as miter saws, drills, band saws or table saws are the type of thing that people do buy online, so they suit affiliate marketing. A good real example is an in-depth review of cabinet table saws that gives relevant information to someone who wants to buy one on a set budget.

You can research your proposed website topics, and there is a wide range of tools to help you. These tools can show you the terminology people use when they talk about and search for your topic online, and give you an idea of how much interest there is in the topic. This type of research is often called “keyword research” because people use these keywords when searching the web with Google, Bing or other search engines.

Keyword research tools

These tools are free. You will need a Google account (email) to sign up for Google AdWords. You can type in a keyword, and these tools will suggest related keywords and estimate how many searches each one gets.

Getting a domain name

The next step is deciding what to call your blog and choosing a domain name.

There are a lot of different websites where you can buy a domain name. The major providers charge about the same amount to register one; prices may differ by only $1 to $3. The major domain sellers (registrars) also provide web hosting, so unless you are really low on cash, it is easier just to use the same provider for both your domain name and hosting. This also makes setting things up a lot easier, especially if you are new to this. The major providers include:

- GoDaddy

- Bluehost

- HostGator

- 1&1

- iPage

- WP Engine

Some of these even offer a free domain when you sign up for their hosting.

Domain name extension / suffix

The domain name extension or suffix is the part at the end of a domain name, after the dot (e.g. for google.com, the extension or suffix is “.com”). Different extensions exist for different types of websites and for different locations.

By location

If you are in the USA, the default is “.com”, but in the UK the default is “.co.uk”, in Australia “.com.au”, and so on. If you are targeting visitors in a certain country, you probably want to use that country’s extension, as it signals to visitors that your website is relevant to them.
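For readers who are comfortable with a little code, the idea of an extension can be sketched in Python. This is a simplified illustration, not how registrars actually work: it assumes a small, hand-picked set of two-part country suffixes (the hypothetical `MULTI_PART_SUFFIXES`), whereas real tools rely on the full Public Suffix List.

```python
# Hypothetical, hand-picked set of two-part country suffixes.
# A real tool would consult the full Public Suffix List instead.
MULTI_PART_SUFFIXES = {"co.uk", "com.au"}


def domain_extension(domain: str) -> str:
    """Return the extension/suffix of a domain name, e.g. '.com' or '.co.uk'."""
    labels = domain.lower().strip(".").split(".")
    # Check for a known two-part suffix first (e.g. "co.uk").
    if len(labels) >= 3 and ".".join(labels[-2:]) in MULTI_PART_SUFFIXES:
        return "." + ".".join(labels[-2:])
    # Otherwise the extension is just the last label (e.g. "com").
    return "." + labels[-1]


print(domain_extension("google.com"))     # .com
print(domain_extension("example.co.uk"))  # .co.uk
```

Splitting on the last dot alone would wrongly report “.uk” for a UK site, which is why the sketch checks the two-part suffixes first.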

General and topic extensions

There are a lot of extensions that are not country-targeted and are either very general or very specific. For example, “.info” and “.net” are very general, while newer types such as “.lighting” or “.car” are very specific to a topic.

Special suffixes

There are limitations on who can register certain types of domain extensions, for example, you need to be a government organization to register the “.gov” extension and an educational institution to register the “.edu” extension. So don’t bother trying to register those types of domain extensions.

The best domain extension type

The best domain extension type depends on your situation. If you only target people in a specific country, it is best to go with that country’s extension. If you want visitors from all around the world, or you are in the United States, then “.com” is the best option. However, the domain name you want (the part before the “.com”) may not be available, because “.com” is the most common extension and many names are already taken.

The “.com” extension is also the best choice for a business. If people type your domain name to get to your site, chances are they will type the “.com” version. If you do not own the “.com” version, they will end up on someone else’s site. See more about choosing a domain name extension.

Brand vs descriptive domain names

When naming your website and domain there are two options:

- use a descriptive name (like Cars) that tells people what your site is about; or

- use a brandable name (like Google) that does not mean anything except your brand or website.

Usually, if you call your site or blog “Cars”, you try to get a domain name that is as similar as possible, like “cars.com”. The same applies to brandable names.

There are pros and cons of each approach. However, I recommend a brandable name as a safer long-term option.

Pros and cons of a descriptive name

In some cases, a descriptive name (like cars.com) can help you get found for that keyword phrase through search engines, because the search engine may assume that people searching for the phrase are looking for your site. However, what ranks well in search engines (e.g. Google and Bing) is constantly changing, and descriptive names may fall out of favor in the future. Because people have used descriptive names in the past to trick search engines into ranking sites that did not provide real value to visitors, descriptive names have gained a bad reputation. Many factors influence search engine ranking, so even with a descriptive domain name you may still have trouble ranking.

A descriptive name like “Cars” can tell your audience what your site is about, but it can also be limiting if you decide to branch out into other topics, like boats. Do you want to find out about boats from a site about cars?

Descriptive names are also not as memorable as brand names.

Another problem is that most of these short and descriptive domain names are already taken.

Pros and cons of a brandable name

A brandable site name (like google.com) can make you stand out and be memorable if you develop your brand well. Unlike with a descriptive name, your site could be about any topic, and it will not seem strange if you expand the range of topics that you write about.

There is an almost infinite number of potential brandable names available to register.

Search engines like to rank and send people to real websites that provide real value and have a long-term presence. By building a brand that people recognize and search for, you may gain an advantage in the search engines.

I recommend that you use a brandable name and build up your brand over time. You could be the next Google, Huffington Post, Facebook or [insert your favorite brand here].

Getting website hosting

After you have chosen a website name and registered your domain name the next thing that you need is web hosting. There are many different types of web hosting and even more companies that can provide web hosting. The major types or categories of web hosting are:

- shared hosting;

- cloud hosting;

- VPS hosting; and

- dedicated hosting.

See more info about web hosting types.

Most people start their first blog or website with shared hosting.

Selecting and installing a content management system (CMS)

Once you have registered a domain name and have hosting, you need to install a content management system. Most shared hosting providers give you access to “cPanel”, a menu interface that helps you set up and manage your website(s) and email addresses.

Most web hosts let you auto-install a content management system, and there are usually several to choose from. The CMS installer may be called “Installatron”, “Softaculous” or something else, depending on your hosting provider. The common types of CMS are:

Each has its own advantages and disadvantages. However, one CMS stands out above the rest: WordPress. It is by far the easiest and most flexible system, especially for beginners, and it is the most widely used CMS on the web. You really don’t need to think about it; just choose and install WordPress.

Installing a wordpress theme

When you install the WordPress content management system (CMS), you may be asked to choose a theme, or the installation may just give you the default theme. Even if you did choose a theme during installation, you will probably want to choose another one that better suits how you want your website to look and function. There is a wide range of free WordPress themes, and many have good design and functionality.

Furthermore, the functionality of WordPress themes can be extended with plugins and widgets.

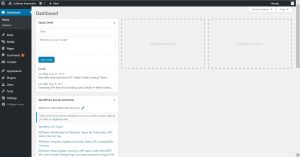

Installing themes, customizing them and extending them with plugins and widgets are all done through the WordPress dashboard.

You can log into your dashboard by adding /wp-admin to the end of your website domain name.
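As a small illustration of that rule, here is a Python sketch that builds the dashboard address from whatever you type as your site. The function name `dashboard_url` is hypothetical, and the sketch assumes WordPress is installed at the root of your domain and that your site uses HTTPS when no scheme is given.

```python
def dashboard_url(site: str) -> str:
    """Build the WordPress dashboard address for a site.

    Assumes WordPress is installed at the site root and that the site
    is served over HTTPS when no scheme is given.
    """
    site = site.strip().rstrip("/")
    # Add a scheme if only the domain name was typed.
    if not site.startswith(("http://", "https://")):
        site = "https://" + site
    return site + "/wp-admin"


print(dashboard_url("example.com"))  # https://example.com/wp-admin
```

If WordPress lives in a subfolder (for example yoursite.com/blog), the same rule applies: add /wp-admin to the end of that address.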

Creating great content

Now you are set to start blogging. So how do you create great content? Creating great content is a massive topic in itself. However, the two most important things are to capture and hold your reader’s attention and to provide value to the reader. In addition to writing great content, you can use images to engage your audience. Read more about creating great content.

Getting visitors

Now that your website is set up, you need some visitors. Unfortunately, building a website does not make people come to it: “If you build it, they will come” does not apply to websites. You need to promote your website, and one of the best ways to promote a new website is through social media. Sign up for the major social media sites that you think your audience uses. The major social media sites that have a lot of visitors include:

- YouTube

Once you know which social media sites your audience usually uses, sign up, complete your profile (especially the link back to your website), and share snippets of your content with a link back to your site. If your content is interesting, people will click the link back to your website and read the whole article.

Building an audience

Once you start getting visitors to your website, you will want a way to contact them, so you can tell them about new content or about a product or service you may offer in the future. One of the best ways to do this is to collect email addresses by asking visitors to sign up for your newsletter, or by offering them something for free (for example, a free ebook they can download after entering their email address and signing up for your newsletter). There are a number of free and paid WordPress plugins you can use for this. By continually adding to your email list, you will build up your audience over time.